Reinforcement learning for robust locomotion in a custom MuJoCo Hopper environment with domain randomization, curriculum learning, and entropy scheduling.

Team: Ali Vaezi · Joseph Fayyaz · Parastoo Hashemi · Sajjad Shahali

- Overview

- Repository Structure

- Environments and Randomization

- Algorithms Implemented

- Setup

- Training

- Results

- Citation

- License

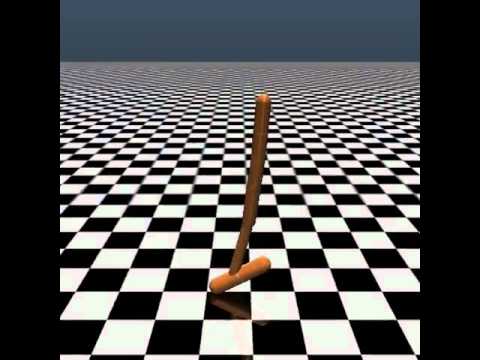

This repository presents a study of reinforcement learning (RL) algorithms applied to a custom MuJoCo Hopper environment. The project aims to build locomotion policies that stay robust under uncertain dynamics, using:

- Classic Policy Gradient Methods: REINFORCE, Actor-Critic

- Advanced On-Policy Algorithms: Proximal Policy Optimization (PPO)

- Robustness Techniques: Uniform Domain Randomization (UDR), Curriculum Domain Randomization (CDR), Entropy Scheduling (ES)

```text
.
├── src/
│   ├── env/                # Custom MuJoCo Hopper environment
│   ├── evaluation/         # Evaluation utilities and scripts
│   └── training/           # Training scripts for all agents
├── Logs/                   # Training logs and episode returns
│   ├── actor_critic/
│   ├── Learning_Curve/
│   ├── PPO_robustness/
│   └── PPO_sweep/
├── models/                 # Saved model checkpoints
│   ├── actor_critic/
│   ├── PPO/
│   └── reinforce_baseline/
├── render/                 # Visual results (GIF, MP4, plots)
├── requirements.txt
├── CITATION.cff
├── LICENSE
└── README.md
```

The environment is based on a custom subclass of the MuJoCo Hopper (`custom_hopper.py`), extended with:

- Parameter Randomization: friction, damping, body mass, initial state

- Domain Randomization:

  - Uniform DR (UDR): parameters randomized every episode

  - Curriculum DR (ES-CDR): difficulty scaled with agent performance and return entropy
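As a minimal sketch of the Uniform DR idea (fresh dynamics parameters every episode), the snippet below resamples link masses uniformly within ±`rel_range` of placeholder nominal values. The dictionary `NOMINAL_MASSES`, its values, the relative-range scheme and the function `sample_udr_masses` are hypothetical; the actual parameters, ranges and the way `custom_hopper.py` applies them may differ.

```python
import numpy as np

# Placeholder nominal masses (kg). Names and values are illustrative
# assumptions, not read from custom_hopper.py.
NOMINAL_MASSES = {"thigh": 4.0, "leg": 3.0, "foot": 5.0}


def sample_udr_masses(nominal, rel_range, rng):
    """Uniform DR: draw each mass independently from [m * (1 - r), m * (1 + r)]."""
    if not 0.0 <= rel_range < 1.0:
        raise ValueError("rel_range must lie in [0, 1) so that masses stay positive")
    return {
        name: float(rng.uniform(m * (1.0 - rel_range), m * (1.0 + rel_range)))
        for name, m in nominal.items()
    }


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    for episode in range(3):
        # In a real environment these values would be written into the
        # MuJoCo model (e.g. its body_mass array) when the episode is reset.
        masses = sample_udr_masses(NOMINAL_MASSES, rel_range=0.5, rng=rng)
        print(episode, masses)
```

Calling the sampler once per reset gives each episode its own dynamics, while seeding the NumPy generator keeps a run reproducible.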

| Algorithm | Description |
|---|---|
| REINFORCE | Monte Carlo policy gradient with optional baseline |
| Actor-Critic | TD-based policy/value method |
| PPO | Clipped surrogate objective with GAE (Stable-Baselines3) |
| UDR | Domain variation with uniform sampling |
| ES-CDR | Return entropy-driven difficulty adjustment |
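Two rows of the table rest on standard formulas: the Monte Carlo return used by REINFORCE (optionally reduced by a baseline) and PPO's clipped surrogate objective. The NumPy sketch below only evaluates these quantities; it is not the repository's code, and in practice they are computed on autograd tensors (Stable-Baselines3 handles PPO internally) so that gradients can flow. The function names and the defaults `gamma=0.99` and `clip_eps=0.2` are illustrative assumptions.

```python
import numpy as np


def discounted_returns(rewards, gamma=0.99):
    """Monte Carlo returns G_t = r_t + gamma * G_{t+1}, computed backwards in time."""
    returns = np.zeros(len(rewards), dtype=np.float64)
    running = 0.0
    for t in reversed(range(len(rewards))):
        running = rewards[t] + gamma * running
        returns[t] = running
    return returns


def reinforce_loss(log_probs, returns, baseline=None):
    """REINFORCE loss -sum_t log pi(a_t|s_t) * (G_t - b_t), with b_t = 0 if no baseline."""
    log_probs = np.asarray(log_probs, dtype=np.float64)
    advantages = np.asarray(returns, dtype=np.float64)
    if baseline is not None:
        # Subtracting a baseline lowers variance without biasing the gradient.
        advantages = advantages - np.asarray(baseline, dtype=np.float64)
    return float(-np.sum(log_probs * advantages))


def ppo_clip_objective(ratio, advantages, clip_eps=0.2):
    """PPO clipped surrogate: mean of min(r * A, clip(r, 1 - eps, 1 + eps) * A)."""
    ratio = np.asarray(ratio, dtype=np.float64)
    advantages = np.asarray(advantages, dtype=np.float64)
    unclipped = ratio * advantages
    clipped = np.clip(ratio, 1.0 - clip_eps, 1.0 + clip_eps) * advantages
    # Taking the minimum removes any incentive to push the ratio outside the clip range.
    return float(np.mean(np.minimum(unclipped, clipped)))
```

For example, with `gamma=0.5` the rewards `[1, 1, 1]` give returns `[1.75, 1.5, 1.0]`, and a probability ratio of 1.5 with advantage 1 contributes only 1.2 to the PPO objective because of clipping.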

Install the required packages:

```bash
pip install -r requirements.txt
```

You need MuJoCo 2.1+ properly installed (MuJoCo 2.1 and later no longer require a license key). Refer to the MuJoCo installation guide.

From the root directory:

```bash
# REINFORCE
python src/training/Train_Reinforce_vanila.py

# REINFORCE with baseline
python src/training/Train_Baseline.py

# Actor-Critic
python src/training/Train_Actor_Critic.py

# PPO + UDR + ES-CDR
python src/training/PPO_UDR_ES_CDR.py --Domain cdr --Entropy_Scheduling True --seed 0
```

To run the PPO hyperparameter sweep:

```bash
python src/training/PPO_Hyperparameter_Calculation.py
```

Curriculum DR (CDR) gradually widens the ranges of the domain parameters (e.g., torso mass, friction) during training, so the agent first masters dynamics close to the nominal model and then adapts to more varied ones.
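A minimal sketch of how a parameter's sampling range could widen with the curriculum level, assuming a linear schedule and three levels (as in the reported results). The function `curriculum_bounds`, the default `max_rel_range=0.5` and the 3.5 kg nominal torso mass are hypothetical, not taken from `PPO_UDR_ES_CDR.py`.

```python
def curriculum_bounds(nominal, level, max_level=3, max_rel_range=0.5):
    """Sampling interval for one domain parameter at a given curriculum level.

    Assumes a linear schedule: the relative half-width grows from
    max_rel_range / max_level at level 1 to max_rel_range at max_level.
    """
    if not 1 <= level <= max_level:
        raise ValueError(f"level must be in [1, {max_level}], got {level}")
    if not 0.0 <= max_rel_range < 1.0:
        raise ValueError("max_rel_range must lie in [0, 1) so the parameter stays positive")
    half_width = max_rel_range * level / max_level
    return nominal * (1.0 - half_width), nominal * (1.0 + half_width)


if __name__ == "__main__":
    # Hypothetical nominal torso mass of 3.5 kg, for illustration only.
    for level in (1, 2, 3):
        low, high = curriculum_bounds(3.5, level)
        print(f"level {level}: torso mass ~ U[{low:.2f}, {high:.2f}]")
```

A linear schedule is only one choice; a geometric or performance-adaptive schedule would fit the same interface.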

Entropy Scheduling (ES) monitors the entropy of the agent's episode returns. When the return entropy is low, meaning the agent performs consistently at the current level, the curriculum advances to the next level.
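One possible reading of this rule, sketched under assumptions: "return entropy" is taken here as the Shannon entropy (in nats) of a histogram of the most recent episode returns, and the level advances when that entropy falls below a threshold. The repository may define and compute it differently; the class `EntropyScheduler`, the threshold, window size and bin count are hypothetical, and the performance condition mentioned for ES-CDR is not modelled.

```python
from collections import deque

import numpy as np


def return_entropy(returns, bins=10):
    """Shannon entropy (in nats) of a histogram of episode returns."""
    counts, _ = np.histogram(returns, bins=bins)
    probs = counts[counts > 0] / counts.sum()
    return float(-np.sum(probs * np.log(probs)))


class EntropyScheduler:
    """Advance the curriculum level once recent returns have low entropy."""

    def __init__(self, max_level=3, threshold=1.0, window=50, bins=10):
        self.level = 1
        self.max_level = max_level
        self.threshold = threshold
        self.bins = bins
        self.recent = deque(maxlen=window)

    def update(self, episode_return):
        """Record one episode return and return the (possibly advanced) level."""
        self.recent.append(episode_return)
        window_full = len(self.recent) == self.recent.maxlen
        if window_full and self.level < self.max_level:
            if return_entropy(list(self.recent), self.bins) < self.threshold:
                self.level += 1
                self.recent.clear()  # collect fresh returns at the new level
        return self.level
```

Clearing the window after each promotion ensures the next decision is based only on returns gathered at the harder level.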

```bash
python src/training/PPO_UDR_ES_CDR.py --Domain cdr --Entropy_Scheduling True --seed 0
```

| Curriculum Level | Mean Return | Std Dev | Return Entropy |
|---|---|---|---|
| 1 | 820 | ±50 | 1.02 |
| 2 | 710 | ±70 | 1.30 |
| 3 | 665 | ±85 | 1.48 |

Training metrics are saved as CSV files in the `Logs/` directory. To visualise them:

```bash
python evaluation/plot_csv_scripts/plot_metrics.py
```

If you use this code, please cite:

```bibtex
@software{vaezi2025sim2real,
  title  = {Sim-to-Real Reinforcement Learning for MuJoCo Hopper},
  author = {Vaezi, Ali and Fayyaz, Joseph and Hashemi, Parastoo and Shahali, Sajjad},
  year   = {2025},
  url    = {https://github.com/aliivaezii/sim2real}
}
```

This project is licensed under the MIT License. See `LICENSE` for details.